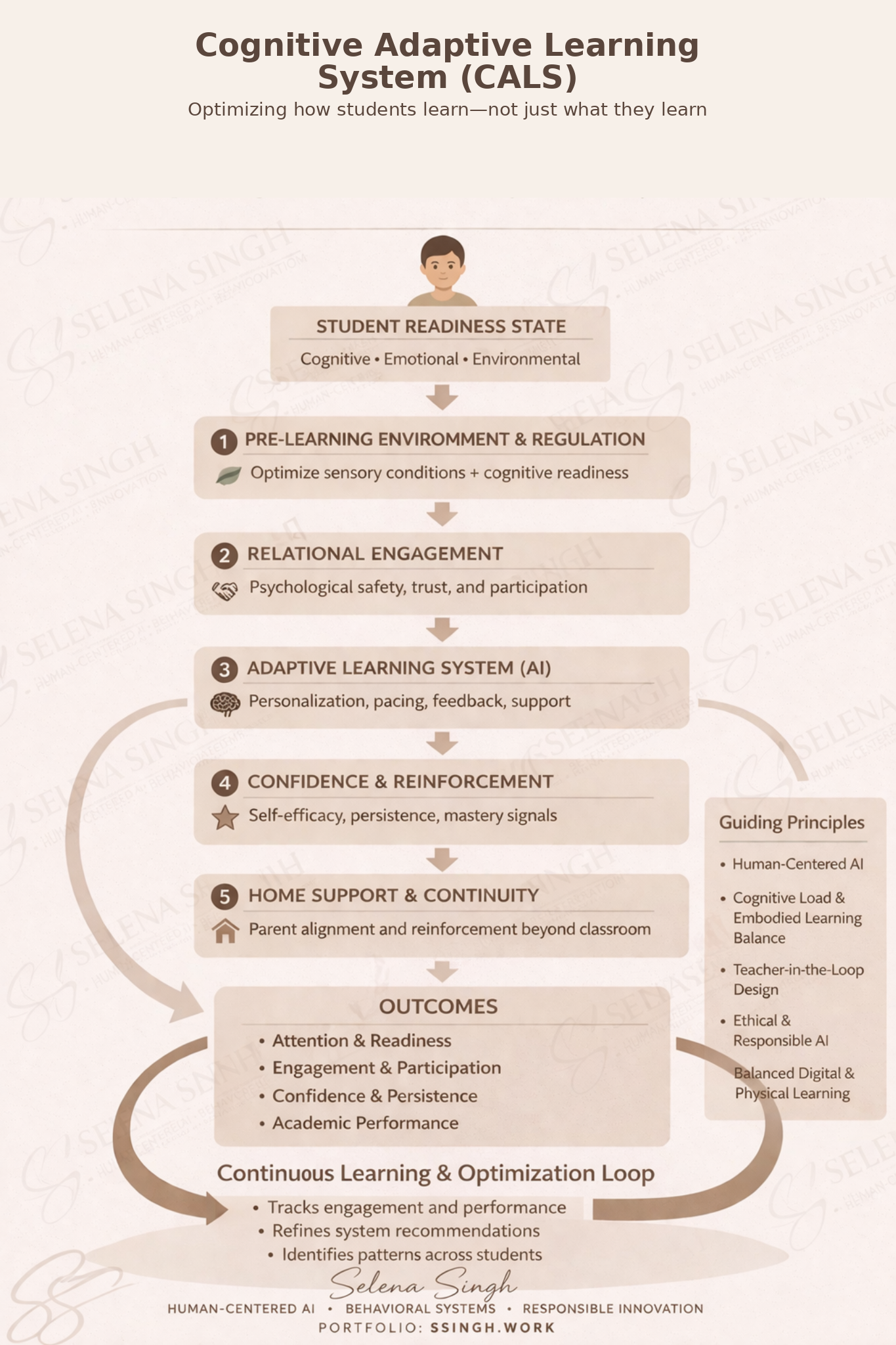

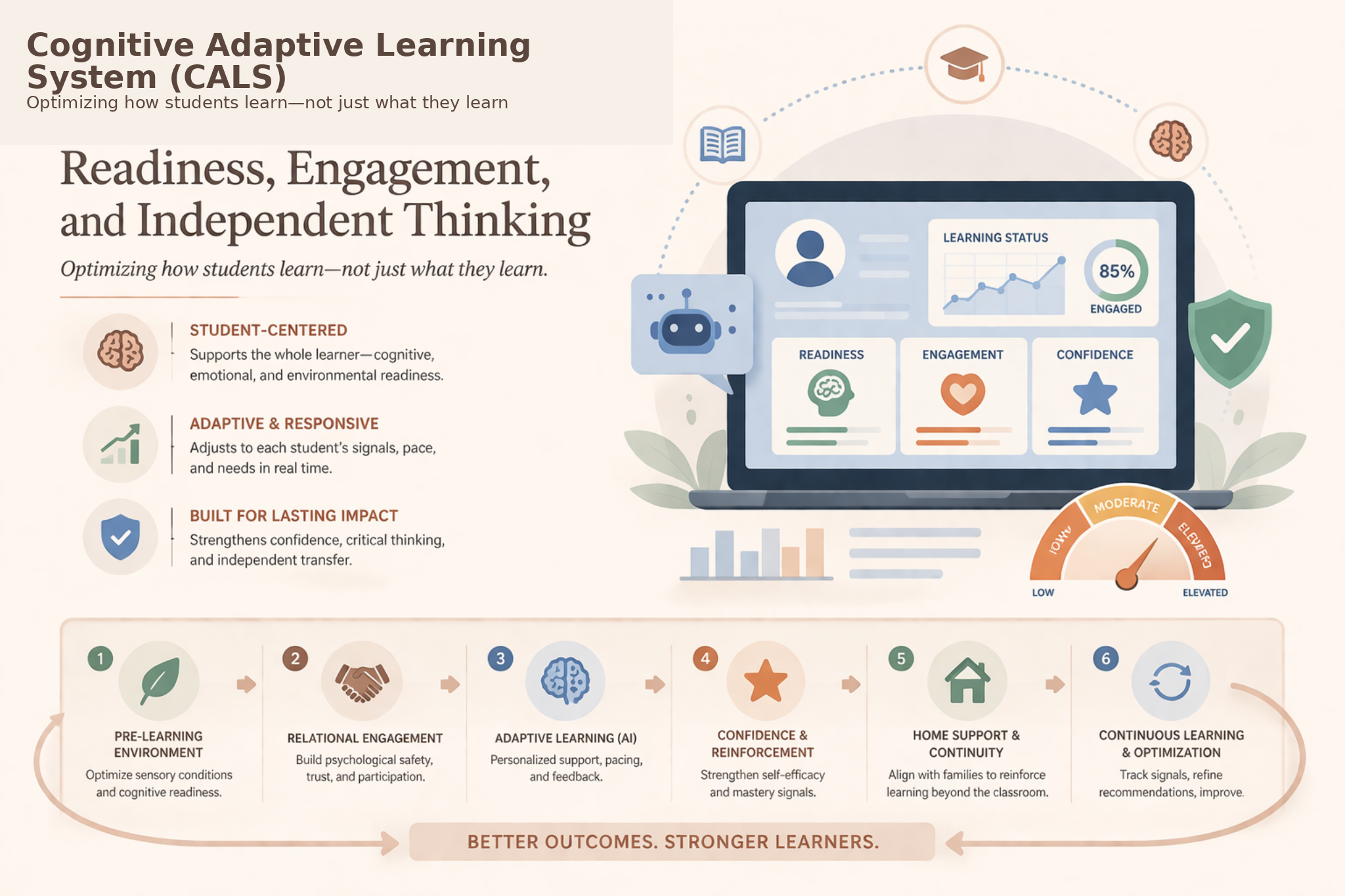

Cognitive Adaptive Learning System (CALS): A Human-Centered Framework for Readiness, Engagement, and Independent Thinking

Overview

Developed the Cognitive Adaptive Learning System (CALS), a human-centered framework designed to improve readiness, engagement, and independent thinking in AI-supported study environments.

This work introduces a behavioral and governance-driven framework that structures how students interact with AI, ensuring that AI supports learning without replacing reasoning.

The system operationalizes principles from human–computer interaction, cognitive psychology, and education research into measurable behavioral signals and adaptive system logic.

Problem

AI is increasingly integrated into learning environments, yet most systems:

prioritize answer generation over learning process

allow unrestricted AI interaction, increasing dependency risk

fail to account for student readiness, attention, and engagement

rely on self-reported understanding rather than demonstrated learning

lack governance mechanisms to prevent over-reliance and automation bias

These gaps create conditions where AI may improve efficiency while unintentionally weakening independent thinking and long-term learning outcomes.

Framework

Developed a human-centered AI learning framework that models learning as a behavioral system rather than a purely instructional process.

The framework is structured across seven layers:

Readiness Activation – preparation of cognitive and emotional conditions for learning

Relational Engagement – support for participation and psychological safety

Adaptive Learning with AI – structured AI-assisted support based on context

Critical Thinking with AI – guided interaction to improve question quality and evaluation

Confidence & Reinforcement – support for persistence and engagement

Independent Thinking & Transfer – validation of understanding beyond AI assistance

Continuous Feedback & Governance – adaptive system behavior based on observed signals

This framework ensures that AI interaction is shaped by human learning conditions, not just content delivery.

Approach

System Design & Simulation

Designed and implemented a structured learning system using simulated student inputs, including:

readiness, confidence, and engagement levels

task complexity

baseline prompting behavior and transfer performance
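
The simulated inputs listed above could be represented roughly as follows. This is a minimal sketch, not the original implementation: the `SimulatedStudent` class, its field names, the 0 to 1 scales, and the uniform sampling are all assumptions made for illustration.

```python
import random
from dataclasses import dataclass


@dataclass
class SimulatedStudent:
    """One simulated student; field names and 0-1 scales are assumptions."""
    readiness: float          # 0.0 (not ready) to 1.0 (fully ready)
    confidence: float
    engagement: float
    task_complexity: float    # 0.0 (trivial) to 1.0 (very demanding)
    baseline_prompts: int     # prompts used per task before any intervention
    baseline_transfer: float  # score on an unaided transfer task


def simulate_students(n: int, seed: int = 0) -> list[SimulatedStudent]:
    """Draw n students from uniform distributions (an arbitrary choice)."""
    rng = random.Random(seed)  # seeded so a simulation run is repeatable
    return [
        SimulatedStudent(
            readiness=rng.random(),
            confidence=rng.random(),
            engagement=rng.random(),
            task_complexity=rng.random(),
            baseline_prompts=rng.randint(1, 12),
            baseline_transfer=rng.random(),
        )
        for _ in range(n)
    ]


cohort = simulate_students(5, seed=42)
```

Seeding the generator keeps runs comparable, so a change in downstream results can be traced to the system logic rather than to a different random cohort.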

Readiness Activation Modeling

Simulated a pre-learning activation phase to improve cognitive readiness and reduce overload:

modeled increases in readiness, confidence, and engagement

incorporated flexible student behavior rather than rigid compliance

aligned system flow with real-world learning conditions
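
One way to model the activation phase is as a bounded boost scaled by how fully a student takes part. The sketch below is an assumption, not the update rule used in this work: `activate`, `gain`, and `uptake` are hypothetical names, and the diminishing-returns form is just one plausible choice.

```python
def activate(value: float, gain: float, uptake: float) -> float:
    """Move value toward 1.0 by a fraction gain * uptake of the remaining gap.

    uptake in [0, 1] models how fully the student takes part in the
    activation phase, so partial participation yields a partial boost
    instead of being treated as non-compliance.
    """
    if not (0.0 <= value <= 1.0 and 0.0 <= gain <= 1.0 and 0.0 <= uptake <= 1.0):
        raise ValueError("value, gain, and uptake must all lie in [0, 1]")
    return value + gain * uptake * (1.0 - value)


# Same starting readiness, different levels of participation.
full = activate(0.4, gain=0.5, uptake=1.0)
partial = activate(0.4, gain=0.5, uptake=0.5)
skipped = activate(0.4, gain=0.5, uptake=0.0)
```

Because the boost is a fraction of the remaining gap, the result never exceeds 1.0, and a student who skips the activation phase simply keeps their starting value.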

Structured AI Interaction

Developed a constrained AI interaction model:

adaptive prompt support levels based on readiness and task complexity

pre-thinking requirement before prompting

evaluation of AI responses rather than passive acceptance
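
The constrained interaction model can be illustrated with a small rule set. This is a sketch under stated assumptions: the three support levels, the gap cutoffs (0.0 and 0.3), and the 10-word pre-thinking minimum are placeholders invented for this example, not calibrated values from the system.

```python
from enum import Enum


class SupportLevel(Enum):
    """Levels describe the support offered, not the student (hypothetical names)."""
    MINIMAL = "minimal"        # hints only
    GUIDED = "guided"          # prompt scaffolds and question stems
    STRUCTURED = "structured"  # step-by-step prompting template


def support_level(readiness: float, task_complexity: float) -> SupportLevel:
    """Pick a support level from the gap between task demand and readiness."""
    gap = task_complexity - readiness
    if gap <= 0.0:
        return SupportLevel.MINIMAL
    if gap <= 0.3:
        return SupportLevel.GUIDED
    return SupportLevel.STRUCTURED


def may_prompt(pre_thinking_note: str, min_words: int = 10) -> bool:
    """Gate AI access on a written first attempt (the pre-thinking requirement)."""
    return len(pre_thinking_note.split()) >= min_words
```

A word count is a deliberately crude gate. It checks that an attempt exists, not that it is good, which leaves the quality judgment to the later evaluation steps.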

Critical Thinking & Behavioral Evaluation

Tracked how students interact with AI:

prompt efficiency and redundancy

response evaluation behavior

intentional vs. reactive prompting patterns
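
Prompt redundancy could be approximated with a simple lexical measure. The sketch below uses Jaccard word overlap as a stand-in; it is not the metric used in this work, and the function names and the 0.8 threshold are assumptions. A real system would likely need a semantic comparison, since rephrased repeats share few words.

```python
def jaccard(a: str, b: str) -> float:
    """Word-set overlap of two prompts: 0.0 (disjoint) to 1.0 (same word set)."""
    set_a, set_b = set(a.lower().split()), set(b.lower().split())
    if not set_a and not set_b:
        return 1.0  # two empty prompts are treated as identical
    return len(set_a & set_b) / len(set_a | set_b)


def redundancy_rate(prompts: list[str], threshold: float = 0.8) -> float:
    """Share of prompts that nearly repeat any earlier prompt in the session."""
    if not prompts:
        return 0.0
    repeats = sum(
        1
        for i, prompt in enumerate(prompts)
        if any(jaccard(prompt, earlier) >= threshold for earlier in prompts[:i])
    )
    return repeats / len(prompts)


session = [
    "explain photosynthesis",
    "explain photosynthesis",
    "how do chloroplasts capture light",
]
rate = redundancy_rate(session)  # one of the three prompts repeats an earlier one
```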

Independent Thinking & Transfer Validation

Required students to demonstrate understanding without AI assistance:

explanation in their own words

application of knowledge to new problems

This layer serves as the system’s primary validation mechanism for learning.

Governance & Bias Mitigation

Embedded governance directly into the system:

multi-signal evaluation (not reliant on self-report or AI output alone)

behavioral validation through observed interaction patterns

no rigid labeling or fixed ability categorization

transparent system signals with human-in-the-loop interpretation
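
The governance points above can be sketched as a small review step that compares what a student reports with what they demonstrate. Everything here is illustrative: the `Signals` fields, the equal weighting of explanation and transfer, and the 0.25 gap threshold are assumptions, not values from the system.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    """Per-task signals on a 0-1 scale; names and scales are assumptions."""
    self_report: float  # how well the student says they understand
    explanation: float  # rated quality of the own-words explanation
    transfer: float     # score on a new problem solved without AI


def review(signals: Signals, gap_threshold: float = 0.25) -> dict:
    """Summarize signals for a human reviewer without assigning an ability label."""
    demonstrated = (signals.explanation + signals.transfer) / 2
    gap = signals.self_report - demonstrated
    return {
        "demonstrated": demonstrated,
        "perception_gap": gap,
        # A flag only asks a person to look closer; it is not a verdict.
        "needs_human_review": abs(gap) >= gap_threshold,
    }


overconfident = review(Signals(self_report=0.9, explanation=0.5, transfer=0.4))
aligned = review(Signals(self_report=0.6, explanation=0.6, transfer=0.6))
```

Returning the raw signals alongside the flag keeps the output transparent, and the absence of any category field reflects the choice to avoid fixed ability labels.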

Key Tradeoffs Identified

Identified critical system-level tradeoffs shaping learning outcomes:

Structured Support vs. Over-Restriction

AI Assistance vs. Independent Thinking

Efficiency vs. Cognitive Engagement

Personalization vs. Dependency Risk

Findings

Readiness activation improved baseline engagement, confidence, and task initiation

Structured prompting reduced redundant AI interaction and improved question quality

Students demonstrated stronger independent explanation and transfer performance

Higher-quality AI interaction correlated with lower dependency scores

Multi-signal evaluation revealed gaps between perceived and actual understanding

Because they come from simulated student inputs, these findings suggest, rather than prove, that structuring AI interaction can improve learning outcomes without removing access to AI.

Outcome

Developed a scalable human-centered AI learning system grounded in behavioral and cognitive principles

Designed a repeatable framework for evaluating and structuring AI-supported learning environments

Demonstrated how AI can be integrated without degrading independent thinking

Established a governance-aware approach to mitigating dependency and automation bias in education

Key Capabilities Demonstrated

AI Governance & Responsible AI Design

Behavioral Systems Modeling in Learning Environments

Human–AI Interaction Design (HCI)

Educational Systems Strategy

Adaptive System Design & Evaluation

Cognitive & Behavioral Signal Analysis